In the contemporary digital landscape, the emergence of "deepfakes," hyper-realistic media generated by artificial intelligence, has introduced a formidable challenge to our perception of reality. By utilizing sophisticated machine learning algorithms known as Generative Adversarial Networks (GANs), deepfake technology can manipulate video and audio to make individuals appear to say or do things they never said or did. While the creative potential for cinema and education is vast, the nefarious applications of this technology pose a significant threat to democratic processes and individual privacy.

The primary concern lies in the potential for mass disinformation campaigns. In a political context, a well-timed deepfake video could manipulate public opinion or incite social unrest by discrediting leaders during critical election cycles. This phenomenon contributes to what scholars call "reality apathy," a state where the public becomes so cynical about the authenticity of digital content that they lose interest in discerning truth from falsehood. Consequently, the burden of proof shifts, allowing bad actors to dismiss genuine evidence as "fake," a tactic often referred to as the "liar’s dividend."

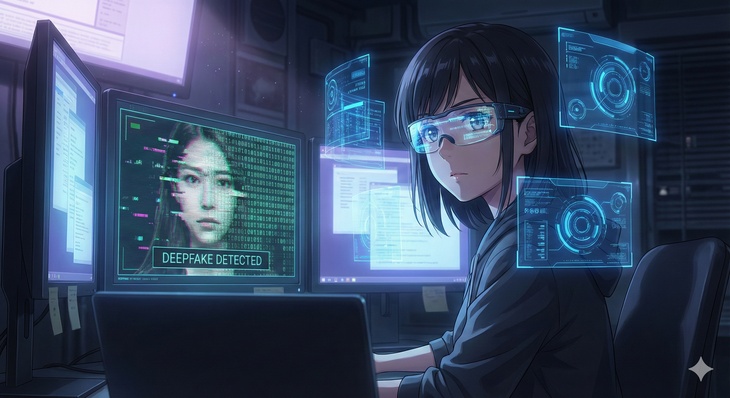

To combat this erosion of trust, researchers are developing AI-based detection tools that can identify subtle inconsistencies in deepfake media, such as unnatural blinking patterns or mismatched lighting. Furthermore, legislative bodies are beginning to propose frameworks that would mandate the "watermarking" of AI-generated content. However, as the technology continues to evolve at an exponential rate, the "arms race" between creators and detectors intensifies. Ultimately, safeguarding digital integrity will require a combination of technological innovation, robust legal protections, and a highly media-literate public.

The Erosion of Truth: Deepfakes and the Challenge to Digital Authenticity

中文翻譯 (Chinese Translation)

在當代數位景觀中,「深度偽造(deepfakes)」,即由人工智慧生成的超逼真媒體,其出現對我們對現實的感知提出了嚴峻的挑戰。透過利用被稱為生成對抗網路(GANs)的高級機器學習演算法,深度偽造技術可以操縱視訊和音訊,讓個人看起來像是說了或做了他們從未說過或做過的事。雖然電影和教育領域的創意潛力巨大,但這項技術的邪惡應用對民主進程和個人隱私構成了重大威脅。

主要的擔憂在於潛在的大規模假訊息活動。在政治背景下,一段時機巧妙的深度偽造影片可能會在關鍵選舉期間透過抹黑領導人來操縱輿論或煽動社會動盪。這種現象促成了學者所說的「現實冷感」,即大眾對數位內容的真實性變得如此憤世嫉俗,以至於失去了區分真偽的興趣。因此,舉證責任發生了轉移,讓不良行為者得以將真實證據斥為「偽造」,這種策略通常被稱為「說謊者的紅利」。

為了對抗這種信任的侵蝕,研究人員正在開發基於人工智慧的檢測工具,可以識別深度偽造媒體中細微的不一致之處,例如不自然的眨眼模式或不匹配的光影。此外,立法機構正開始提議強制要求對人工智慧生成的內容進行「浮水印」處理的框架。然而,隨著技術以指數級速度持續進化,製作者與檢測者之間的「軍備競賽」日益激烈。最終,維護數位完整性將需要技術創新、完善的法律保護以及具備高度媒體素養的大眾共同努力。

🔑 重點單字 (Vocabulary)

- formidable adj. 嚴峻的;難以對付的

- manipulate v. 操縱;控制

- nefarious adj. 邪惡的;不法的

- disinformation n. 虛假訊息

- authenticity n. 真實性

- apathy n. 冷淡;漠不關心

- dividend n. 紅利;好處

- inconsistency n. 不一致;矛盾

- exponential adj. 指數級的

- integrity n. 完整性